Humans are (still) the hard part

I’m writing this blog without AI. I’m not sharing that to be contrarian or to toot my own horn as a writer. The act of writing out my thoughts and then getting them organized has always helped me to clear my head and grant myself focus. My thoughts have been quite jumbled as of late, and while having 2 kids under 2 is a contributing factor, I also haven’t been using writing as a tool for my own mental clarity recently. Instead I’ve been asking AI tools to organize my stream of consciousness, which is great for getting organized quickly but less so for actually internalizing order brought to chaos. And boy is there a surplus of chaos in my world today.

The improvements brought about by AI are revolutionary, and they come with a fair share of negative side effects for humans. If we ignore those side effects completely, they are likely to snowball to the point of harming the wellbeing of our teams.

AI may have changed what the day-to-day of being an engineer looks like, but it won’t change the underlying factors that foster high-performing teams – the most important being psychological safety. A culture where people “feel safe to take risks and be vulnerable in front of each other”[1] both enables high-performing teams and alleviates much of the pain that can come from large changes. Technology has always been an industry of constant change, but the pace of those changes is far more rapid these days, and the people who make up our teams are all feeling the impacts to varying degrees.

My brain every time I open my news feed in 2026

Right now, I don't have a hot take about AI. I do have a team full of humans who are navigating through hard changes, and I want to figure out good ways to support them. I'm writing this to help me make sense of the signals I'm seeing, and if you're reading this it's because I managed to organize my thoughts well enough to think it might be helpful for others.

Anxiety Ahoy

When I went on maternity leave in October 2025, I had no idea that I would be coming back to a world in software engineering that felt nigh unrecognizable to me. I’ve always struggled with imposter syndrome (or have I just tricked people into thinking I can do this for a decade?), but as I attempted to dive into all of the AI tooling developments I had missed in 3 months of newborn snuggles and sleepless nights, I was daunted by anxiety like I’ve never experienced in my career. I would dig in, get past one mental hurdle of figuring out how to bend the agents to my will, then be bombarded with 12 new features that were released last week that I should probably already be using. I got through my local development setup with 100x fewer questions to my teammate, Cameron, just by talking to Claude, reviewing and then approving the fixes it recommended step by step. Then, when I came up for air and looked around, everyone was already parallelizing agents and having them run semi-autonomously. Before I could spend too long worrying about whether or not I was too far behind to ever catch up, Claude released a feature that did a lot of the parallelizing with subagents for you.

I brute-forced my way through the anxiety period with exposure therapy (“Claude, teach me how to use you.”), but I felt it relevant to share my own experience because I am certain I’m not the only one who has felt overwhelmed by all of the “new”. When you combine the learning curve with identity disruption (see headlines like: “‘Engineer’ is so 2025. In AI land, everyone’s a ‘builder’ now”[2] and “I don’t know if my job will still exist in ten years”[3]), comparison pressure (How am I ever going to catch up to the AI power users?), companies continuing to attribute mass layoffs to AI adoption (oh look, the consequences of their own actions[4]), the potential for skills to atrophy[5], increased burnout[6], eroding trust in corporate leaders[7]… you’ve got a decent recipe for panic attack soup.

Changing Dynamics

Meanwhile, the dynamics of how our teams work together are changing as a byproduct of AI as well.

Increased Isolation for Remote Teams

My colleague, Michelle Collums, captured the potential for increased isolation really well in her LinkedIn post:

To quote myself from the previous section of this blog, “I got through my local development setup with 100x fewer questions to my teammate, Cameron”. Was that probably better for Cameron’s focus and productivity? ABSOLUTELY. Cameron also puts up with my random side messages and banter even when I don’t need to ask him a question, because we have a foundation of years of working together and sharing stories of our kids being obsessed with K-Pop Demon Hunters. However, for those who are ramping up on a new team or joining the company as a new hire, the relationships that may have once formed more organically around knowledge sharing now require more intention to create and maintain. Even established teams, as Michelle points out, may feel a decrease in collaboration as a byproduct of having a robot assistant with access to the code and our knowledge base at their fingertips.

Cognitive Offloading Risks

The reason writing works as a tool for me can be attributed to a cognitive psychology phenomenon known as the generation effect.

Explaining what we hear in our own words or writing it down are forms of generation. Generation solidifies schemas in our brains. [8]

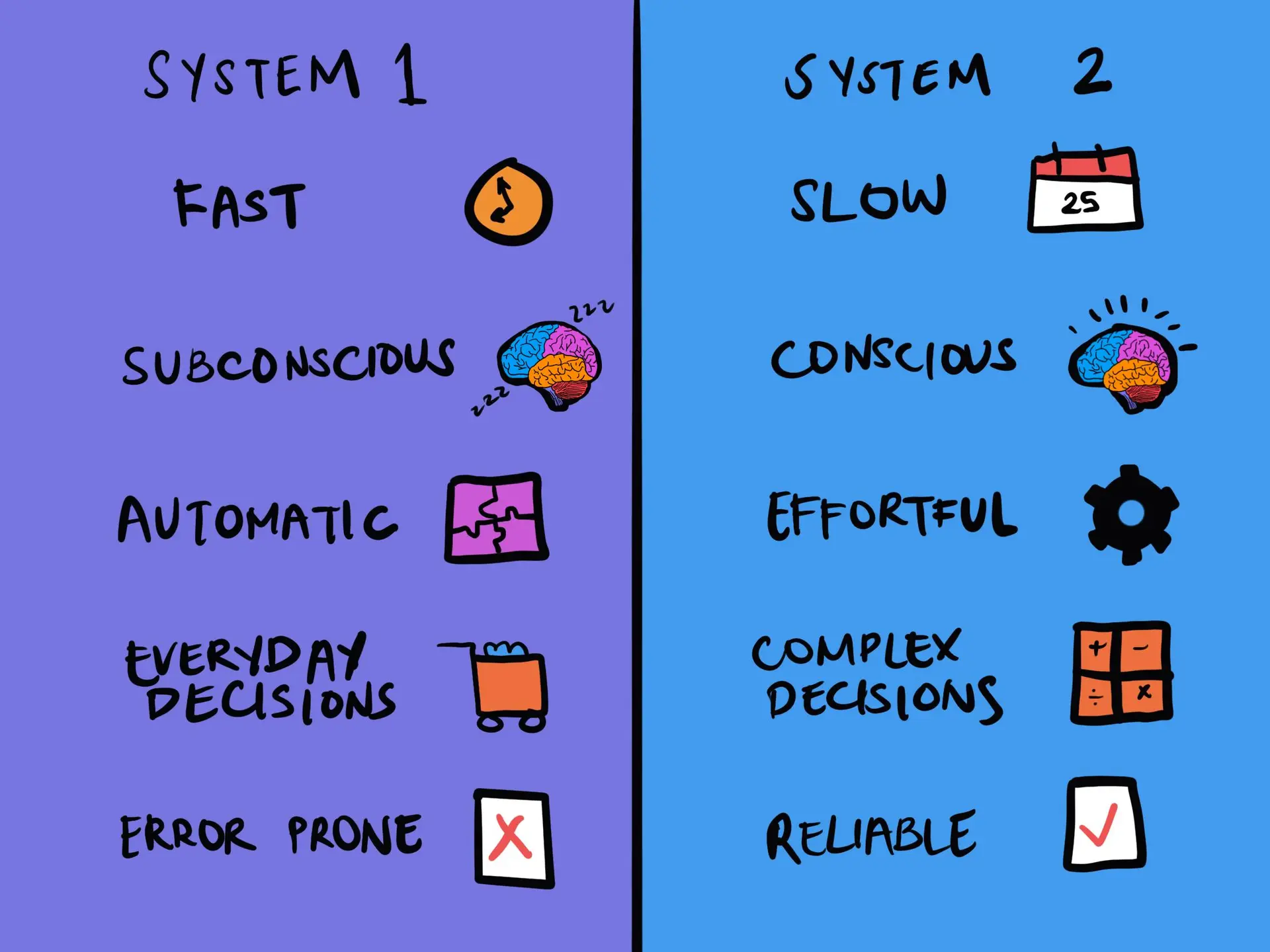

The author of the article quoted above also examines the way AI has added a third mode of thought to the two that Daniel Kahneman described in Thinking, Fast and Slow.[9]

System 3 relies on “artificial cognition”, with the adoption of AI.

This mode of working involves cognitive offloading and surrender. Offloading is when we delegate a cognitively intensive task to System 3. Cognitive surrender is the failure to apply System 2 reasoning to verify what System 3 produces. Offloading is not a problem by itself, but surrendering is. In this process, what our brain would learn is how to use AI to get an answer, but in doing so, we would sacrifice critical thinking and accountability for the output.[8]

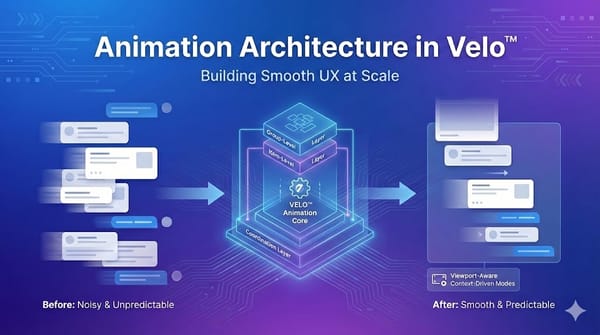

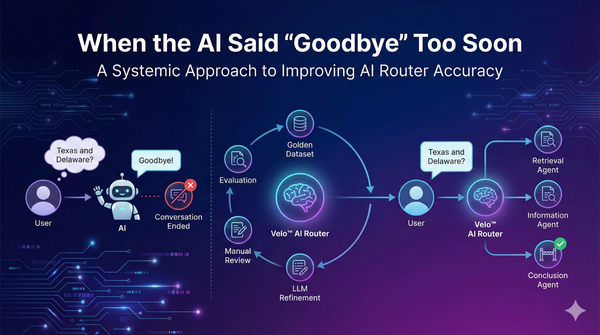

One example of System 3 “thinking” is vibe coding. To be clear, “vibe coding is not the same as AI-Assisted engineering.”[10] Vibe coding is high-level prompting without a focus on the underlying code that is generated, while AI-assisted engineering keeps the engineering disciplines necessary to produce production-grade software front and center.

Crucially, the big difference here is the human engineer remains firmly in control, responsible for the architecture, reviewing and understanding every line of AI-generated code, and ensuring the final product is secure, scalable, and maintainable.[10]

I see a few potential points of friction bubbling up in this space…

- Engineers have to ensure that we review our own code first. This has always been the case, but it was a bit easier when we knew which lines we had actually changed or written, as opposed to what Claude generated behind our prompting. We need to ensure that we don’t pass along the burden of the first-pass code review to our teammates - if we do, that will erode trust over time. No one gets excited to review massive, unvetted AI-generated PRs.

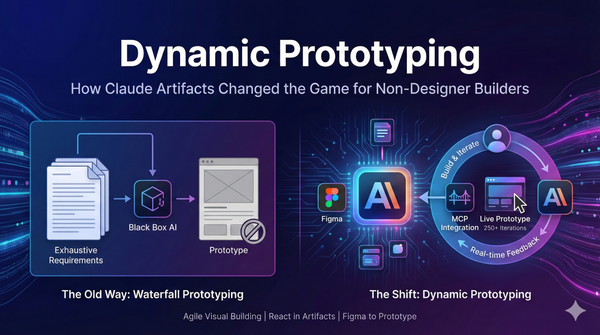

- PRs submitted by non-engineers are becoming a reality. So much so that people are already marketing SaaS solutions for the problems that may arise.[11] We need to have the trust built up within our teams for engineers to be able to hold the line on quality without fearing backlash for upsetting a designer, product manager, TPM, or engineering leader who hasn’t been hands-on in the code recently. We have to treat these code contributions the same way we would any other innersource contribution. “You build it, you run it” may not apply in quite the same way, but the stewards of the codebase are the ones on the hook for it when things go sideways, and therefore must own the final say on what gets merged and deployed.

Psychological Safety

So what can we do about this? Well, as leaders, our remit stays the same. We want to build high-performing teams, and psychological safety is the foundation of a high-performing team. Culture in a company is a living thing that we all contribute to, but leaders play a key role in ensuring we create psychologically safe spaces for our team members.

I still get excited about new tech. I enjoy the debates we have during cross-team architecture reviews. I love the dopamine hit I get from actually being able to contribute code between calls (outside of the critical path), with Claude reducing the cognitive switching and ramp-up previously required to do so. But the part of my job that has brought me the most joy, and is arguably the most important, is taking care of the people. And right now I don’t think AI is capable of replacing that job function. I could be wrong, and it may prove to be a better human than humans, but since I still get irate when I have to talk to a robot instead of a person on the phone, I think we’ve got some runway there.

So what are actionable takeaways here, aside from the fact that Claude probably would have structured these thoughts more efficiently than I did?

- Keep a desire to foster psychological safety at the forefront of our interactions with one another. If you're looking for a starting point, this list from The Psychological Safety Collective is a good one.

- Be intentional about making time for humans. Save space for getting to know your coworkers. Create a spot for banter - at ZenBusiness, our Platform team has a whole Slack channel dedicated to non-work topics (#platform-feelings).

- Remember we are all going through this phase of rapid change together, and no one has been through this specific technology shift before. Find ways to encourage and support one another. The world is hard enough, but it gets a bit easier when we feel like we can be authentic and real with the people we spend the bulk of our time with at work.

- Start watching for signals around the bottleneck shifting to code review rather than code production, and think through ways we can alleviate that before it becomes too painful.

- Find ways to have fun and let the chaos entertain you - /r/vibecoding is a treasure trove of laughs.

- Don’t take yourself too seriously. I didn’t use AI to write this blog, but I consulted it on the title - I’m awful at coming up with titles.

Written by a human, for humans, about humans ❤️

References

[1] Google's Project Aristotle - Psychological Safety Collective

[2] "'Engineer' is so 2025. In AI land, everyone's a 'builder' now" - SF Standard

[3] "I don't know if my job will still exist in ten years" - Sean Goedecke

[4] The Cost of AI Layoffs - Careerminds

[5] AI Assistance and Coding Skills - Anthropic Research

[6] "The AI Vampire" - Steve Yegge

[7] The AI Trust Gap - People Managing People

[8] "Ride the Wave, Don't Drown" - Subbu Allamaraju

[9] System 1 and System 2 Thinking - The Decision Lab

[10] "Vibe Coding Is Not the Same as AI-Assisted Engineering" - Addy Osmani

[11] "Your Next Pull Request Will Come from a Product Manager" - Signadot